Kscope 17 Recap - Day 3

I have got to start getting to sleep earlier but I have been having too much fun meeting with people. So yea I missed the first round of sessions but honestly, it was a conscious decision, nothing looked very interesting to me.

So the first session for me is "Advanced Calculation Techniques: Going Beyond the Calc Dim" with the GAP. George Cooper talked about some different strategies to improve calculation performance especially with regards to Hybrid Essbase.

First Cross dimensional operators are not supported in Hybrid, however similar functionality can be achieved by using the Sumrange function. Additionally a calculation can go across multiple dimensions by chaining Sumrange functions together.

Example 1:

"A100"=Sumrange("Rate Amt", @List("Template Input")

Example 2 (chaining):

"Test1" = @SumRange("rate", @List("E100"))

"Test2" = @SumRange("test1", @List("E100"))

This process is similar to sub-query in SQL so member in the chain the performance impact multiplies.

When optimimizing ASO or Hybrid calculations it is probably necessary to set the MAXFORMULACACHESIZE setting in the Essbase config.

Last Gary explained that the default calculation mode for Hybrid is TopDown which breaks functionality to for the @Prior function. The default setting can be overriden by using the calculation mode of BottomUp, once this is done @Prior will work as it does by default in BSO.

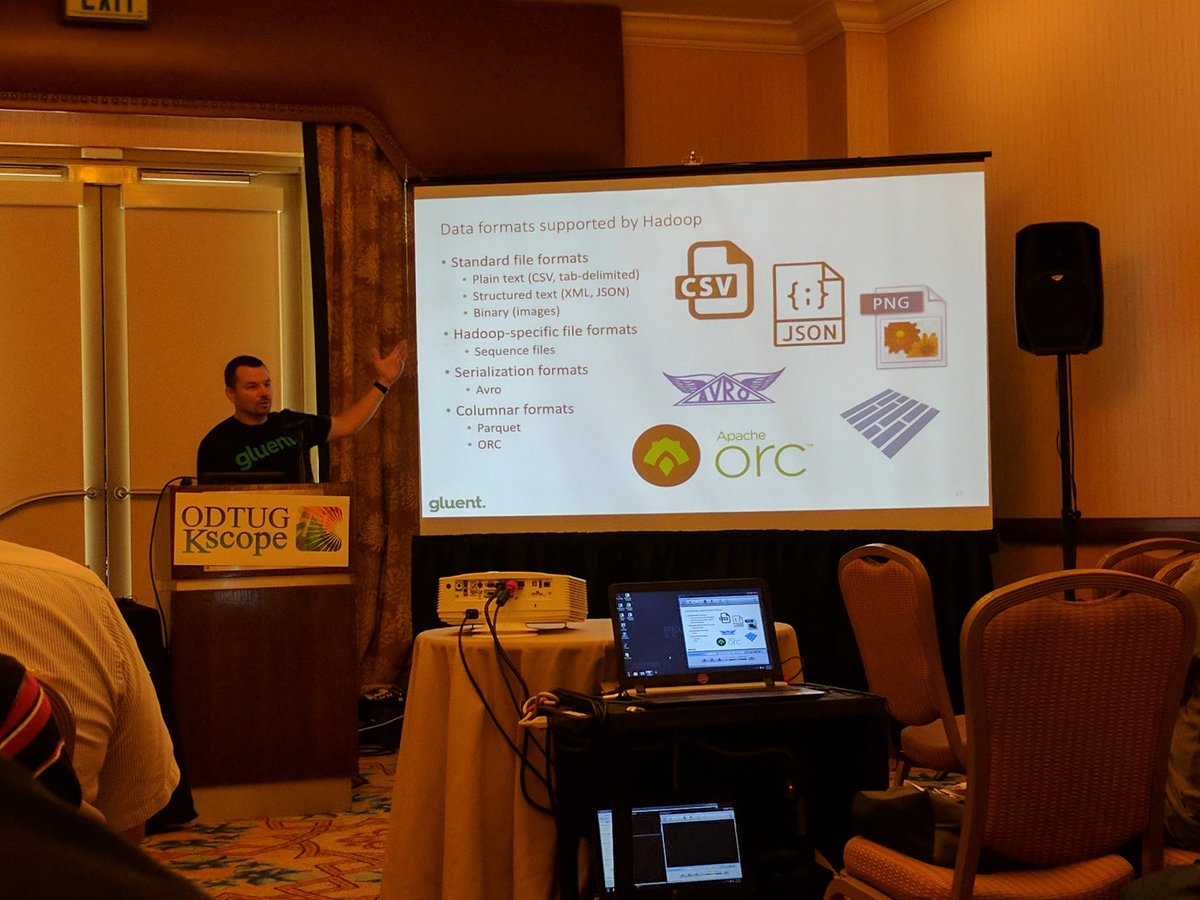

Next up I visited Michael Rainey's session "SQL on Hadoop for the Oracle Professional". Michael explained how the structure of Hadoop is different from traditional databases. Traditional enterprise setups generally use SANs which adds a layer between the database engine and the database data. Hadoop is structured with a name server and several data nodes, the nodes replicate the data at least 3 times across the nodes which provides fault tolerance and makes it ideal for use on commodity servers. When new nodes are added the system can reconfigure the distribution of data. The name server stores the metadata for the environment. When queries are run the name server will direct the client to a data node with the correct data. Since the nodes house the data and the database engine they can in ideal conditions be much faster than traditional setups. SQL for Hadoop is the logical next step since SQL is commonly understood language and many Hadoop database engines support it in various degrees. Michael did point out that due to the decentralized nature of Hadoop complex queries can be difficult to process since servers have to run various parts of query but will have to replicate the results to other nodes to allow for complex filtering.

After lunch a friend and I attempted to attended "Extreme Essbase Calculations: New Frontiers in What-If, Goal Seeking and Sensitivity Analysis". Unfortunately we lost interest after about 15 minutes and decided to find the coffee shop and go walk the Exhibit hall. On the plus side this gave us time to check out some cool new products. Appvine is pretty interesting in that it brings the power of Excel for Essbase to the web. Stopped by Incorta again, still amazed. This also gave me time to visit some friends. Big thanks to John A. Booth and Ash Jain. Also was awesome to see Doga Pamir, by the way congrats on taking home two speaking awards (Best New Presenter and Best Financial Close Presentation).

Last I attended Francisco's "Black Belt Techniques for FDMEE Admins and Developers". This was by far the best FDMEE presentation (and it won the award for that). I wish I had taken more notes but I will be sure to download the slides later. Francisco covered a ton of techniques and any summary I give at this time will not do it justice.

Wrap Up

I really want thank the conference committee, they did a amazing job of making Kscope17 a success. I am really looking forward to next year.

Now for some random photos from the special event and after party.

So that's a wrap from a couple of the EPM Junkies, see you next year.